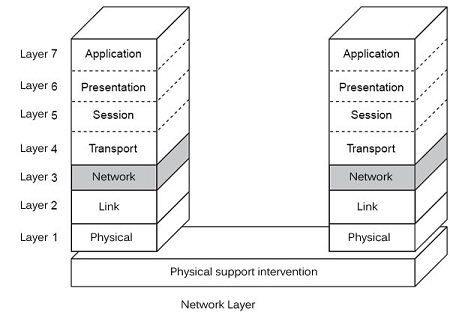

The function of the network layer is to provide end-to-end communication capability to the transport layer, which lies above it, as shown in the figure. The OSI reference model specifies that the transport layer need not know how the network layer performs this communication.

The main difference between the data link layer and the network layer is that the data link layer provides a reliable node-to-node service between adjacent nodes, whereas the network layer provides an end-to-end service between the source and the destination. A station that has a message to send splits it into a number of small addressable units called packets and then transmits them.

The network layer protocols are concerned with the exchange of packets. To send packets between the source and the destination, the network layer must know the topology of the network. The functions of the network layer are listed below.

This layer exists when the network operates at the packet level. The functions defined by international standards within the framework of the reference model are:

• Setting up network connections to route packets. This function is not mandatory at the packet level, and a connectionless mode is perfectly conceivable. X.25 works in connection-oriented mode, whereas IP works in connectionless mode.

• Handling packet routing and the gateway issues involved in reaching another network.

• Multiplexing network connections.

• Supporting segmentation and grouping (blocking) of data.

• Packet loss detection and recovery of lost packets. This function is not mandatory at the packet level. IP, for example, is a packet-level protocol that has no means of recovering lost packets.

• Preserving the sequence of the data delivered to the upper layer (not mandatory).

• Flow control, so that the buffers responsible for packet transmission do not overflow.

• Transfer of expedited data (not mandatory).

• Resetting the network connection (not mandatory).

• Quality of service (not mandatory).

• Managing the network layer.

Services to Transport Layer

The network layer offers two types of service for the transmission of packets: virtual circuit and datagram. A virtual circuit is a connection-oriented service, and a datagram is a connectionless service. In the connection-oriented approach, a complete path is established between the source and the destination before transmission starts. In the connectionless case, each packet is transmitted with the destination address, and the path between the source and the destination may differ from packet to packet.

The Virtual Circuit Method

To provide a connection-oriented service, virtual circuits are used. Each node maintains a table of all the virtual circuits passing through it. More than one virtual circuit can pass through a single node. All packets belonging to a single virtual circuit follow the same route. Each packet carries a virtual circuit number field in its header, in addition to the sequence number and checksum fields. When a packet is received at a node, the node determines the input line on which the packet arrived and its virtual circuit number. Based on this information, the packet is forwarded to the next node. The discussion that follows refers to a model network and the virtual circuit table of a single node.

Virtual circuits pass through node A and node B in the model network. Three virtual circuits pass through node A: virtual circuit ABCDE is given number 0, virtual circuit ABGD is given number 1, and AFGC is virtual circuit number 2. The accompanying table denotes the virtual circuit table for node A. Note that, for the sake of clarity, not all the virtual circuits passing through node A are shown in the table. Each entry in the virtual circuit table contains four fields. The first two fields identify the virtual circuit link used by the incoming packet, and the third and fourth fields are used to choose the outgoing virtual circuit link for sending the packet. Whenever a packet arrives at the node, the node selects one of the outgoing virtual circuit numbers from the table and sends the packet through it.

In the above diagram, if station 51 wants to send a packet to station 55, it sends the packet to node A. Node A searches for a suitable virtual circuit number in the right-hand side of its virtual circuit table. It selects the first entry and transmits the packet to node B with virtual circuit number 0. If that link is already in use, it selects the next available entry for transmitting the packet to node B. Node B may select either the same virtual circuit number or a different one, depending on which virtual circuits are available at that time, for sending the packet to node C. Hence, the packet may use different virtual circuit numbers at different nodes during transit. A call setup procedure is performed before the actual transmission starts, to establish the virtual circuit. Once the virtual circuit is set up, all packets are transmitted through the same virtual circuit. More than one virtual circuit can pass through a single physical link.
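As a rough illustration of the table lookup described above, the following Python sketch models a virtual circuit table as a dictionary that maps the two incoming fields to the two outgoing fields. The line names, the circuit numbers, and the `forward` helper are hypothetical; the entries are chosen for illustration and are not taken from the figure.

```python
# Hypothetical virtual circuit table for node A.
# Key:   (incoming line, incoming virtual circuit number)
# Value: (outgoing line, outgoing virtual circuit number)
table_a = {
    ("H", 0): ("B", 0),  # "H" stands for the attached host; circuit ABCDE
    ("H", 1): ("B", 1),  # circuit ABGD
    ("H", 2): ("F", 0),  # circuit AFGC
}


def forward(table, in_line, in_vc):
    """Return (outgoing line, outgoing VC number) for an arriving packet."""
    try:
        return table[(in_line, in_vc)]
    except KeyError:
        # No call setup was performed for this circuit on this line.
        raise LookupError(f"no virtual circuit {in_vc} on line {in_line}")


out_line, out_vc = forward(table_a, "H", 2)
print(out_line, out_vc)  # F 0
```

Note that the outgoing circuit number can differ from the incoming one, which is why a packet may carry different numbers at different nodes.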

Routing

The function of the network layer is to route packets from the source machine to the destination machine. To do so, the network layer uses software called a routing algorithm. This software selects a specific route for a whole sequence of packets if a virtual circuit is used, or makes an independent routing decision for every packet if the datagram service is used. This section discusses some essential routing algorithms used by the network layer.

Routing algorithms can be grouped into two major categories: non-adaptive and adaptive. Non-adaptive routing algorithms do not use the current traffic conditions or network topology at the time of routing. Adaptive routing algorithms, in contrast, use changes in traffic conditions and topology at the time of routing to make the best routing decision.

Adaptive algorithms can be further classified into the following three categories:

• Centralized routing: Information is collected from the entire network before an optimal routing decision is made.

• Isolated routing: Only the local information around the node is used to perform routing.

• Distributed routing: A mixture of local and global information is used to perform routing.

Optimality Principle

If router J is in the optimal path from router I to router K, then the optimal path from J to K also falls along the same route.

To see this, call the part of the route from I to J r1 and the rest of the route r2. If a route better than r2 existed from J to K, it could be concatenated with r1 to improve the route from I to K. This contradicts the assumption that r1r2 is optimal.

Because of the optimality principle, the optimal routes from all sources to a given destination form a tree rooted at the destination. This tree is known as a sink tree, and it is a spanning tree of the network. The distance metric used here is the number of hops.

The goal of all routing algorithms is to determine the sink trees for all the routers. Since a sink tree contains no cycles, each packet is delivered within a finite number of hops. Because a network is susceptible to link or node failures, a routing algorithm must also take the current network topology and traffic conditions into account to avoid the problems associated with such failures. Different routing algorithms use different strategies to avoid congestion.

Non-adaptive Algorithms

These algorithms are also known as static routing algorithms. The routing decision is made in advance and is not based on the current traffic conditions or network topology.

Shortest path algorithm

Dijkstra proposed this algorithm in 1959. The key idea is to construct a graph of the network, with each node of the graph representing a router and each arc representing a link.

To find the route between a given pair of nodes, the algorithm finds the shortest path between them on the graph. The path length can be measured as the number of hops or as the distance. Each link is labeled according to the chosen criterion. In the most general case, the labels are computed as a function of the distance, mean queue length, measured delay, average traffic, communication cost, and other factors. By changing the weighting function, the algorithm computes the shortest path with respect to any of these criteria.

The algorithm

Each node is labeled with its distance from the source. The algorithm uses the following steps to determine the shortest path between a given pair of nodes.

• Each node is labeled with its distance from the source node along the best-known path.

• Initially, all nodes are marked with infinity, as no paths are known.

• The source node is marked as permanent.

• All nodes adjacent to the source node are examined.

• Each adjacent node is tentatively labeled with its distance from the source node.

• After all the adjacent nodes are labeled, the node with the lowest tentative label is marked as permanent. This node becomes the new working node.

• As the algorithm proceeds, if a node that is already labeled is encountered, the algorithm computes the sum of the label on the current working node and the distance from the working node to the labeled node.

• If the sum is less than the label on the node, the node is relabeled.

• The above steps are repeated until the destination node is reached.
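The steps above can be sketched in Python as follows. This is a minimal illustration that assumes the network is given as a dictionary of link costs; the function name `shortest_path`, the example graph, and the use of a priority queue to pick the lowest tentative label are choices made for this sketch.

```python
import heapq


def shortest_path(graph, source, destination):
    """Dijkstra's algorithm; graph maps node -> {neighbor: link cost}."""
    label = {node: float("inf") for node in graph}  # all labels start at infinity
    label[source] = 0
    previous = {}
    permanent = set()
    heap = [(0, source)]
    while heap:
        distance, node = heapq.heappop(heap)  # lowest tentative label
        if node in permanent:
            continue                          # stale heap entry
        permanent.add(node)                   # becomes the working node
        if node == destination:
            break
        for neighbor, cost in graph[node].items():
            candidate = distance + cost
            if candidate < label[neighbor]:   # relabel if the sum is smaller
                label[neighbor] = candidate
                previous[neighbor] = node
                heapq.heappush(heap, (candidate, neighbor))
    if label[destination] == float("inf"):
        return None, float("inf")             # destination unreachable
    path = [destination]
    while path[-1] != source:
        path.append(previous[path[-1]])
    path.reverse()
    return path, label[destination]


# Illustrative four-router network (costs are made up).
graph = {
    "A": {"B": 1, "C": 4},
    "B": {"A": 1, "C": 2, "D": 5},
    "C": {"A": 4, "B": 2, "D": 1},
    "D": {"B": 5, "C": 1},
}
print(shortest_path(graph, "A", "D"))  # (['A', 'B', 'C', 'D'], 4)
```

Changing the numbers stored in `graph` from hop counts to delays or costs changes what "shortest" means without changing the algorithm.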

Flooding

Flooding is another non-adaptive algorithm. Every incoming packet is sent out on every outgoing line except the one on which it arrived. Hence, all possible paths from source to destination are tried in parallel, and flooding always finds the shortest path. The main problem with flooding is that it generates a large number of duplicate packets. Therefore, some measure is required to damp the process. One method is to place a hop counter in each packet, initialized to the number of hops along the path from source to destination. The counter is decremented at each node visited by the packet, and the packet is discarded as soon as the count reaches zero. A variation of flooding known as selective flooding sends the packet only on those lines that lead approximately in the right direction, rather than on all outbound links. Flooding is not an efficient routing scheme, but it still has some use in military and distributed applications.
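To make the effect of the hop counter concrete, here is a small Python simulation of flooding. It is only a sketch: the `flood` function, the adjacency-list representation, and the example network are assumptions, and real routers would process copies concurrently rather than from a single queue.

```python
from collections import deque


def flood(graph, source, hop_limit):
    """Simulate flooding; return (nodes reached, number of transmissions)."""
    reached = {source}
    transmissions = 0
    # Each item is one packet copy: (node holding it, arrival line, hops left).
    queue = deque([(source, None, hop_limit)])
    while queue:
        node, arrived_from, hops_left = queue.popleft()
        if hops_left == 0:
            continue  # counter exhausted: this copy is discarded
        for neighbor in graph[node]:
            if neighbor == arrived_from:
                continue  # never send back on the arrival line
            transmissions += 1
            reached.add(neighbor)
            queue.append((neighbor, node, hops_left - 1))
    return reached, transmissions


# Illustrative network: graph maps node -> list of neighbors.
graph = {
    "A": ["B", "C"],
    "B": ["A", "C", "D"],
    "C": ["A", "B", "D"],
    "D": ["B", "C"],
}
reached, sent = flood(graph, "A", hop_limit=2)
print(sorted(reached), sent)  # ['A', 'B', 'C', 'D'] 6
```

Even in this four-node network, six transmissions are needed to reach three other nodes, and D receives the packet twice, which shows why the duplicates must be damped.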

Flow-based routing

The non-adaptive algorithms discussed so far consider only the network topology, not the network load. A shorter path may contain links that are always heavily loaded. In that case it can be wiser to select a longer path that carries less traffic. This approach is called flow-based routing. As a static routing scheme, it requires the mean packet delay on each line to be calculated in advance. In networks with predictable traffic, the mean packet delay can be computed by statistical analysis. The information required for this is the network topology, the traffic pattern, and the capacities of the various links.

Adaptive Routing Algorithms

These algorithms are also known as dynamic algorithms. Routing decisions are based on the current network traffic.

Distance vector routing

In this routing scheme, each router maintains a routing table that contains the best-known route to each destination. These tables are updated dynamically by exchanging information with all neighboring routers.

The routing table contains one entry for every router in the network. Each entry consists of two parts: the preferred outgoing line for that destination, and an estimate of the time or distance to it. The metric may be distance, queue length, or delay. The router is assumed to know the distance to each of its neighbors. If the metric is queue length, the router examines each queue. If the metric is delay, the router sends a special test packet called an echo packet to the neighbor, gets it back, and measures the turnaround delay.

Consider distance as the metric. Each router sends its routing information to all neighboring routers every T milliseconds. Assume that router A receives a distance vector from a neighboring router B. Each entry Bi in the distance vector denotes B’s estimate of the distance to router i. If router A knows its distance to B to be d, then the distance from A to router i via B is computed as d + Bi. By collecting this information from all its neighbors, A can estimate the best possible route to every destination. A’s old routing table is not used at all in this new estimation.
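The table computation described above can be sketched in Python. The function `update_routes` and the example distances are hypothetical; the sketch assumes the router already knows the distance d to each neighbor and has received one vector from each of them.

```python
def update_routes(neighbor_distance, neighbor_vectors):
    """Build a fresh routing table for one router.

    neighbor_distance: {neighbor: d}, the known distance to each neighbor
    neighbor_vectors:  {neighbor: {destination: Bi}}, the received vectors
    Returns {destination: (preferred outgoing line, estimated distance)}.
    """
    table = {}  # the old table is deliberately not consulted
    for neighbor, d in neighbor_distance.items():
        for destination, b_i in neighbor_vectors[neighbor].items():
            estimate = d + b_i  # distance to `destination` via this neighbor
            if destination not in table or estimate < table[destination][1]:
                table[destination] = (neighbor, estimate)
    return table


# Router A has neighbors B (distance 2) and C (distance 5).
distance = {"B": 2, "C": 5}
vectors = {
    "B": {"B": 0, "C": 4, "D": 3},
    "C": {"B": 4, "C": 0, "D": 1},
}
print(update_routes(distance, vectors))
# {'B': ('B', 2), 'C': ('C', 5), 'D': ('B', 5)}
```

In this example A reaches D through B (2 + 3 = 5) rather than through C (5 + 1 = 6), even though C itself is closer to D.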

In practice, this technique has a serious drawback: information about bad routes propagates slowly. News of a good route spreads rapidly to all routers, but the failure of a node or link is not recognized immediately. Some ad hoc solutions are used to mitigate this problem. Another issue with distance vector routing is that it does not take line bandwidth into account.

Link state routing

This routing scheme was developed to replace distance vector routing.

The key idea behind this algorithm can be stated as follows:

• Each router discovers the addresses of all its neighboring routers.

• The delay or cost to each of its neighbors is measured.

• A packet is constructed containing all the collected information.

• This packet is sent to all other routers.

• The shortest path to every other router is computed using the shortest path routing algorithm discussed earlier.

The algorithm works in the following way:

In step 1, the router sends a special ‘hello’ packet on each point-to-point line to all neighboring routers.

It collects the addresses of all neighboring routers from their replies.

In step 2, the router measures the delay to each neighbor by sending it a special echo packet, which the neighbor returns immediately. The delay is one half of the turnaround time. The measurement is repeated several times to compute the average delay.

In step 3, a packet is constructed containing the sender’s address, followed by a sequence number and a list of the neighboring nodes’ addresses. For each neighboring node, the delay is also given.

In step 4, flooding, one of the static algorithms described earlier, is used to distribute the packets to all other routers.

In step 5, Dijkstra’s algorithm is used to compute the shortest path to every other router.
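Steps 2 to 4 can be illustrated with a short Python sketch. The `LinkStatePacket` class and the helper functions are hypothetical names. Using the sequence number to reject duplicate or outdated packets during flooding is an assumption of this sketch, since the text above does not say how the number is used.

```python
from dataclasses import dataclass


@dataclass
class LinkStatePacket:
    sender: str      # address of the router that built the packet
    sequence: int    # incremented for each new packet from this sender
    neighbors: dict  # {neighbor address: measured delay}


def average_delay(turnaround_times):
    """Step 2: the delay is half the turnaround time, averaged over probes."""
    return sum(t / 2 for t in turnaround_times) / len(turnaround_times)


def accept(database, packet):
    """Step 4 (receiving side): keep a flooded packet only if it is new."""
    held = database.get(packet.sender)
    if held is not None and held.sequence >= packet.sequence:
        return False  # duplicate or stale: neither stored nor forwarded
    database[packet.sender] = packet
    return True


def topology(database):
    """Turn the stored packets into a graph {node: {neighbor: delay}}."""
    return {sender: dict(p.neighbors) for sender, p in database.items()}


database = {}
delay_to_b = average_delay([4.0, 6.0])  # two echo probes to neighbor B
packet = LinkStatePacket("A", 1, {"B": delay_to_b})
print(accept(database, packet))  # True
print(accept(database, packet))  # False (same sequence number)
print(topology(database))        # {'A': {'B': 2.5}}
```

The graph returned by `topology` has the shape a shortest path routine needs for step 5.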

Hierarchical routing

As the network grows, the routing tables grow proportionately, and router memory is consumed by ever larger tables. Another problem is the CPU time needed to scan the table. At some point, it is no longer feasible for every router to hold an entry for every other router. The hierarchical approach addresses these problems. In the hierarchical routing scheme, the routers are divided into regions. Each router knows how to route within its own region but knows nothing about the internal structure of other regions. For very large networks, a two-level hierarchy is not sufficient. Hence, regions are grouped into clusters, clusters into zones, zones into groups, and so on. This significantly reduces the size of the routing table that must be maintained in router memory. In a two-level hierarchy, the routing table contains an entry for every router in the local region and one entry for every other region. The size of the table therefore depends on how the routers are divided into regions. The main drawback of this scheme is increased path length.
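The saving in table size for a two-level hierarchy is simple arithmetic, sketched below in Python. The function `table_entries` and the figures of 720 routers and 24 regions are illustrative assumptions, and the sketch assumes all regions have the same number of routers.

```python
def table_entries(total_routers, regions):
    """Entries per router in a two-level hierarchy with equal-sized regions."""
    if total_routers % regions != 0:
        raise ValueError("this sketch assumes equal-sized regions")
    local = total_routers // regions  # one entry per router in the own region
    remote = regions - 1              # one entry per other region
    return local + remote


print(table_entries(720, 1))   # 720 (no hierarchy)
print(table_entries(720, 24))  # 53  (30 local + 23 remote)
print(table_entries(720, 72))  # 81  (10 local + 71 remote)
```

The last line shows that dividing the network into too many small regions makes the table grow again.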

Broadcast routing

Broadcast routing is used for sending a message simultaneously to all other nodes in the network. One method is simply to transmit a separate packet to each destination. This method requires high bandwidth and knowledge of all the destinations. The second method is flooding, which suffers from the same bandwidth problem. The third method is known as multi-destination routing. Here, each packet is transmitted with the addresses of all its destinations. Whenever a packet is received, the node examines all the destination addresses and selects the set of outgoing lines needed to reach them. It sends a copy of the packet through each of these lines, and each copy contains only the destination addresses that are reached through that line. In this way, the destinations are partitioned among the outgoing lines. The fourth method is the spanning tree algorithm. A spanning tree is a subset of the network that connects all nodes without any loop. If each node knows which of its outgoing lines belong to the spanning tree, it can copy an incoming packet onto all the spanning tree lines except the one on which it arrived. This algorithm makes excellent use of bandwidth by generating the minimum number of packets. The only requirement is that each node has information about the spanning tree.
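The spanning tree method can be sketched in Python as follows. The `broadcast` function and the example tree are hypothetical; the tree is given as the list of spanning tree lines known to each node.

```python
def broadcast(tree_lines, source):
    """Forward one packet along the spanning tree; return the (from, to) sends."""
    sends = []
    pending = [(source, None)]  # (node holding the packet, line it arrived on)
    while pending:
        node, arrived_from = pending.pop()
        for neighbor in tree_lines[node]:
            if neighbor != arrived_from:  # skip the arrival line
                sends.append((node, neighbor))
                pending.append((neighbor, node))
    return sends


# Illustrative spanning tree over five nodes.
tree = {
    "A": ["B", "C"],
    "B": ["A", "D", "E"],
    "C": ["A"],
    "D": ["B"],
    "E": ["B"],
}
sends = broadcast(tree, "A")
print(len(sends))  # 4
```

With five nodes, exactly four packets are sent, one per spanning tree line, so every node receives the message once.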

Multicasting

Sending a packet to a subset of the nodes of the network is known as multicasting or multicast routing. To perform this, the router first constructs a spanning tree covering all the routers in the network, with the source router as the root. The router then derives a spanning tree for the multicast group by eliminating the links of the original tree that do not lead to any member of the group.
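The pruning step can be illustrated with a short recursive Python function. The name `prune`, the child-list representation of the tree, and the example group are assumptions made for this sketch.

```python
def prune(tree, root, group):
    """Keep only the part of the spanning tree that leads to group members.

    tree maps each node to the list of its children in the spanning tree.
    Returns a tree in the same format, containing only the nodes that remain.
    """
    kept = {}

    def visit(node):
        # Keep a child only if its subtree contains a group member.
        children = [c for c in tree.get(node, []) if visit(c)]
        if children or node in group:
            kept[node] = children
            return True
        return False

    visit(root)
    return kept


# Spanning tree rooted at source router A; only D belongs to the group.
tree = {"A": ["B", "C"], "B": ["D", "E"], "C": ["F"]}
print(prune(tree, "A", {"D"}))
# {'D': [], 'B': ['D'], 'A': ['B']}
```

The lines to C, E, and F are eliminated because none of them leads to a member of the multicast group.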

Congestion Control

Another critical issue for the network layer is congestion. Every computer network can carry only a certain maximum number of packets. As the number of packets in the network keeps increasing, at some stage the whole network becomes flooded with packets: the number of packets present exceeds the maximum number the network can support. This situation is called congestion. When it arises, the routers can no longer cope with the offered packet rate and begin to lose packets, which degrades performance further. At very high traffic, the network comes to a standstill, and no packets are delivered.

Factors causing congestion

• When a sudden burst of packets arrives on several input lines and all the packets need to be routed through the same output line, a queue builds up. If the buffer is not large enough to hold all of them, packets are lost.

• With a very large buffer, a packet takes so long to reach the front of the queue that it times out and is retransmitted, which increases the number of packets.

• Congestion is also caused by slow processors and low-bandwidth lines. If the router’s CPU is slow, queues build up, causing congestion. Matching the processor speed to the line bandwidth can minimize congestion.

• A shortage of free buffers on routers may also cause congestion. It forces the router to discard packets, which are then retransmitted repeatedly until an acknowledgment is received from the receiver. This builds up the number of packets and results in congestion.

Congestion control and flow control

The main difference between congestion control and flow control is that congestion control deals with smoothing the traffic in the whole network; it is a global issue. Flow control, in contrast, deals with controlling the flow of information between a pair of nodes over a point-to-point channel. In most cases, flow control techniques are applied when a fast sender is overwhelming a slow receiver.

Congestion Control Algorithms

Two classes of congestion control algorithms have been developed. Algorithms of the first class are known as open loop algorithms; they do not take current traffic conditions into account. Algorithms of the second class are called closed loop or feedback algorithms. These algorithms are efficient and widely used. They involve three essential steps:

1. Monitoring the network to check the possibility of congestion.

2. Passing appropriate information about congestion to different transmitting locations.

3. Adjusting the network operations to correct the problem that leads to congestion.

The following are some congestion control algorithms:

Traffic regulating algorithms

As sudden bursts of traffic often cause congestion, regulating these bursts helps to minimize it. ATM networks make use of this open loop approach. Here the average rate of transmission is regulated. Whenever a new connection is set up, the sending node must agree with the carrier on a specific traffic pattern. The algorithm monitors the traffic rate, and if the sender exceeds the agreed rate, action is taken to avoid congestion. This strategy can be used in both virtual circuit and datagram networks.

Turner (1986) proposed the leaky bucket algorithm. Each node is connected to the network through a buffer. A node is allowed to put only one packet of fixed size per clock tick onto the network, which prevents sudden bursts of data from leaving the node. The buffer holds packets up to a predefined limit; any further packets are discarded. The algorithm thus smooths out sudden bursts of packets and reduces the chance of congestion. A variation of this algorithm, the token bucket algorithm, is more flexible. It throttles the packet transfer rate while taking the arrival rate of packets into account: when a sudden burst of packets arrives, the output rate is allowed to increase accordingly, which gives a more flexible traffic pattern.
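The difference between the two algorithms is easier to see in code. The Python sketch below is a simplified model in which time advances in clock ticks and every packet has the same size. The class names, the capacities, and the rule of one token per tick are assumptions of the sketch.

```python
from collections import deque


class LeakyBucket:
    """Fixed-size buffer that releases at most one packet per clock tick."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.queue = deque()
        self.dropped = 0

    def arrive(self, packet):
        if len(self.queue) < self.capacity:
            self.queue.append(packet)
        else:
            self.dropped += 1  # buffer full: the packet is discarded

    def tick(self):
        """Return the packet sent in this tick, or None if the buffer is empty."""
        return self.queue.popleft() if self.queue else None


class TokenBucket:
    """One token is saved per tick (up to capacity); each packet costs one token."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.tokens = 0
        self.queue = deque()

    def arrive(self, packet):
        self.queue.append(packet)

    def tick(self):
        """Add a token, then send as many queued packets as the tokens allow."""
        self.tokens = min(self.capacity, self.tokens + 1)
        sent = []
        while self.queue and self.tokens > 0:
            sent.append(self.queue.popleft())
            self.tokens -= 1
        return sent


leaky = LeakyBucket(capacity=3)
for n in range(5):            # burst of five packets
    leaky.arrive(n)
print(leaky.dropped)          # 2
print(leaky.tick())           # 0 (only one packet leaves per tick)

token = TokenBucket(capacity=3)
for _ in range(3):            # three idle ticks save up tokens
    token.tick()
for n in range(4):            # burst of four packets
    token.arrive(n)
print(token.tick())           # [0, 1, 2] (the burst uses the saved tokens)
print(token.tick())           # [3]
```

The leaky bucket always outputs at a constant rate, whereas the token bucket lets a node that has been idle send a short burst.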

Feedback or closed loop methods

Congestion control for virtual circuit networks

Once congestion starts, either no more virtual circuits are permitted until the congestion is resolved, or new virtual circuits are routed carefully around the congested areas, as shown in the figure. Reserving resources such as router buffer space and line bandwidth in advance, when the virtual circuit is set up, also avoids congestion.

Choke packets

This approach is used in both virtual circuit and datagram networks. The router monitors the utilization of each of its output lines and other resources. An output line enters a warning state when its utilization exceeds a predefined limit called the threshold value, and this warning state is marked in each outgoing packet. The router at the receiving end checks each packet for the warning state and, if it is set, sends a choke packet to the source. The choke packet tells the source to reduce its data rate. The source reduces the rate and waits for a certain period to see whether congestion persists; if not, it increases the rate again.
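The behavior of the source can be sketched in Python. Everything numeric here is an assumption: the text gives no threshold value and does not say by how much the rate is reduced or increased, so the threshold of 0.8, the halving of the rate, and the fixed increment are purely illustrative.

```python
def in_warning_state(utilization, threshold=0.8):
    """An output line is in the warning state above the threshold."""
    return utilization > threshold


class Source:
    """Sender that reacts to choke packets (all constants are illustrative)."""

    def __init__(self, rate, decrease=0.5, increase=1.0, max_rate=100.0):
        self.rate = rate
        self.decrease = decrease
        self.increase = increase
        self.max_rate = max_rate

    def on_choke_packet(self):
        # Congestion reported: reduce the data rate.
        self.rate *= self.decrease

    def on_quiet_interval(self):
        # No choke packet arrived during the waiting period: speed up again.
        self.rate = min(self.max_rate, self.rate + self.increase)


source = Source(rate=40.0)
if in_warning_state(0.9):
    source.on_choke_packet()
print(source.rate)  # 20.0
source.on_quiet_interval()
print(source.rate)  # 21.0
```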

Weighted fair queuing

Routers have multiple queues on each outgoing line, one queue per source. The router scans the queues in round-robin fashion, taking one packet from each queue in turn and transmitting it. In this basic form, the method gives equal priority to all hosts; the weighted variant gives some sources a larger share of the line.
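The round-robin scan can be sketched in a few lines of Python. The `round_robin` generator and the example queues are hypothetical, and the sketch covers only the basic, unweighted case with one packet taken per queue per round.

```python
from collections import deque


def round_robin(queues):
    """Yield (source, packet) pairs, taking one packet per queue in turn."""
    queues = {source: deque(packets) for source, packets in queues.items()}
    while any(queues.values()):  # at least one queue still holds packets
        for source, queue in queues.items():
            if queue:
                yield source, queue.popleft()


# Source X has three packets waiting, source Y only one.
order = list(round_robin({"X": ["x1", "x2", "x3"], "Y": ["y1"]}))
print(order)
# [('X', 'x1'), ('Y', 'y1'), ('X', 'x2'), ('X', 'x3')]
```

Source Y's single packet is sent second, so a source with a long queue cannot starve the others.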

Internetworking

The discussion so far has dealt with single stand-alone networks. When two or more networks are involved in an application, the mode of working between the systems is usually called internetworking. We use the term internetwork (or internet) to refer to a composite network consisting of one or more LANs, WANs, or MANs. Each constituent network of the internet is a sub-network or subnet. The role of the OSI network layer is to provide connectivity between different networks and useful interaction between them.

Dinesh Thakur holds an B.C.A, MCDBA, MCSD certifications. Dinesh authors the hugely popular
